VIVE 3DSP Audio SDK

VIVE 3DSP Audio SDK is an audio solution for simulating realistic sounds in virtual worlds. It can be used to create engaging, immersive spatial audio for VR apps and experiences, and it offers a range of features designed for developers.

Some of the unique features of VIVE 3DSP Audio SDK:

- Higher Order Ambisonics (HOA): Highly optimized 3rd order HOA is implemented with very low computing power.

- Head-Related Transfer Function (HRTF): A precise HRTF is modelled in this SDK at 5-degree intervals.

- Room Audio: The reflection and reverberation of a real space are simulated in the room audio model.

- Hi-Res Audio: Audio quality is preserved when Hi-Res audio is processed with VIVE 3DSP Audio features.

- Sound Decay Model: Different attenuation models are provided to reproduce different sound decay phenomena.

- Occlusion: The highly efficient occlusion model works without any Unity collider. In addition, a virtual sound wave can be partially occluded depending on the obstacle geometry.

- Ambisonic Decoder: AmbiX files are supported by the SDK.
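HRTFs are described above as being modelled at 5-degree intervals. One simple way to use such a grid is to snap each source direction to the nearest modelled direction before filtering. The sketch below assumes the 5-degree step applies to both azimuth and elevation, and `nearest_hrtf_direction` is a hypothetical helper, not part of the SDK's API; a production renderer would more likely interpolate between neighbouring HRTFs than snap to one.

```python
# Hypothetical grid: assumes HRTFs are sampled every 5 degrees in both azimuth
# and elevation. The SDK's actual grid layout is not documented here.
GRID_STEP_DEG = 5.0


def nearest_hrtf_direction(azimuth_deg: float, elevation_deg: float) -> tuple:
    """Snap a source direction (degrees) to the nearest point on a 5-degree grid."""
    # Wrap azimuth into [0, 360) so that -2 and 358 land on the same grid point.
    az = azimuth_deg % 360.0
    az_snapped = (round(az / GRID_STEP_DEG) * GRID_STEP_DEG) % 360.0
    # Clamp elevation to the valid range [-90, 90] before snapping.
    el = max(-90.0, min(90.0, elevation_deg))
    el_snapped = round(el / GRID_STEP_DEG) * GRID_STEP_DEG
    return az_snapped, el_snapped


if __name__ == "__main__":
    print(nearest_hrtf_direction(12.4, 3.0))
```

With a 5-degree step, each elevation ring holds 360 / 5 = 72 azimuth positions.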

VIVE 3DSP Audio SDK is hardware-independent, high-quality, user-friendly, and powerful. It delivers outstanding sound experiences, especially on VIVE devices.

Higher Order Ambisonics (HOA)

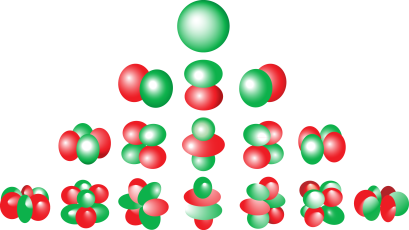

Ambisonics is a full-sphere surround sound technique for simulating spatial sound. It represents the sound pressure field around the listener as a sum of spherical harmonics.

The highest order of spherical harmonics used is called the order of Ambisonics. The order strongly affects how accurately the direction of a sound is reproduced: the higher the order, the sharper the directivity. For this reason, the spatial audio model uses 3rd order Ambisonics, which gives better directivity for spatial sound than lower orders.

Higher orders normally require more computing power for Ambisonic processing, because the number of Ambisonic channels grows quadratically with the order. However, because VIVE 3DSP uses a patent-pending technique in its Ambisonic processing, its 3rd order processing requires only about as much computing power as 1st order Ambisonics.
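To put numbers on that growth: a full-sphere Ambisonic signal of order N has (N+1)^2 channels, so a straightforward implementation of 3rd order processing handles 16 channels instead of the 4 channels of 1st order. The sketch below only does this bookkeeping, using the ACN channel ordering that AmbiX files use; the function names are illustrative, and the code says nothing about how the SDK's patent-pending technique reduces the cost.

```python
def ambisonic_channel_count(order: int) -> int:
    """Number of spherical-harmonic channels in a full-sphere signal of this order."""
    if order < 0:
        raise ValueError("order must be non-negative")
    return (order + 1) ** 2


def acn_index(n: int, m: int) -> int:
    """ACN channel index of the spherical harmonic with degree n and index m (-n <= m <= n)."""
    if not -n <= m <= n:
        raise ValueError("m must satisfy -n <= m <= n")
    return n * n + n + m


if __name__ == "__main__":
    # A naive per-channel cost scales with the channel count: 3rd order is 4x 1st order.
    for order in (1, 2, 3):
        print(order, ambisonic_channel_count(order))
```

For 1st order, ACN indices 0 to 3 correspond to the W, Y, Z, and X channels.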

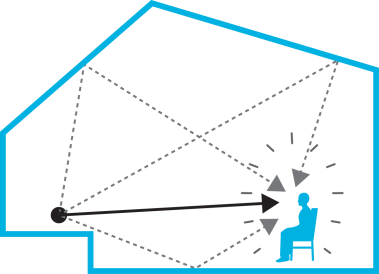

Room Audio

Room Audio simulates how sound behaves in a room, including early reflections, late reverberation, and ambient sound. The material of the walls (e.g. wood, concrete, or glass) shapes the early reflections of a traveling sound. As the sound continues, it undergoes more reflections, mixes with the background sound, and finally turns into late reverberation. Binaural acoustics should also be simulated, especially when the listener is standing in a corner of the room. In addition, a real room usually contains low-level background sound, such as the hum of a refrigerator or an air conditioner, so Room Audio reproduces this phenomenon as well.

Note

Room Audio only takes effect when the audio source and the listener are in the same room.
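To make early reflections and late reverberation concrete, here is a textbook sketch rather than the SDK's algorithm: the image-source method for the six first-order reflections of a rectangular ("shoebox") room, plus Sabine's formula for reverberation time. The wall names (`x0` to `z1`), the 1 m reference distance, and per-wall absorption coefficients standing in for wall materials are assumptions of this example; higher-order reflections and ambient sound are left out.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s, roughly the value in air at 20 degrees C
WALLS = (("x0", "x1"), ("y0", "y1"), ("z0", "z1"))  # hypothetical wall names per axis


def first_order_reflections(room, source, listener, absorption):
    """Return sorted (delay_s, gain) pairs: the direct path plus six wall reflections.

    room: (Lx, Ly, Lz) of a shoebox room with one corner at the origin.
    absorption: wall name -> energy absorption coefficient in [0, 1].
    Gains use 1/r spreading relative to 1 m (clamped below 1 m).
    """
    direct = math.dist(source, listener)
    paths = [(direct / SPEED_OF_SOUND, 1.0 / max(direct, 1.0))]
    for axis, (low, high) in enumerate(WALLS):
        for wall, plane in ((low, 0.0), (high, room[axis])):
            image = list(source)
            image[axis] = 2.0 * plane - source[axis]  # mirror the source in the wall
            d = math.dist(image, listener)
            reflection = math.sqrt(1.0 - absorption[wall])  # pressure reflection factor
            paths.append((d / SPEED_OF_SOUND, reflection / max(d, 1.0)))
    return sorted(paths)


def sabine_rt60(room, absorption):
    """Sabine estimate of reverberation time T60 in seconds for a shoebox room."""
    lx, ly, lz = room
    areas = {"x0": ly * lz, "x1": ly * lz, "y0": lx * lz,
             "y1": lx * lz, "z0": lx * ly, "z1": lx * ly}
    total_absorption = sum(areas[w] * absorption[w] for w in areas)  # m^2 sabins
    if total_absorption <= 0.0:
        raise ValueError("at least one wall must absorb some energy")
    return 0.161 * lx * ly * lz / total_absorption
```

Harder materials (lower absorption) make the reflections louder and the reverberation time longer, which is the link between wall material and room sound described above.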

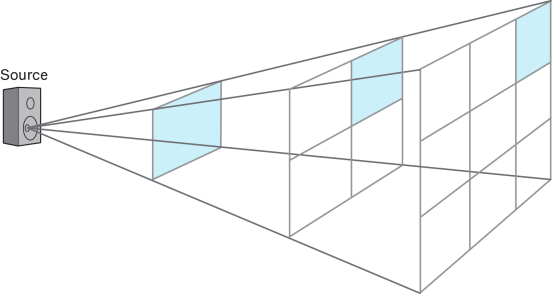

Sound Decay Model

As a sound wave propagates, its energy spreads out and the sound level changes with distance. The inverse square law is the conventional way to determine this change: for a point source, intensity is inversely proportional to the square of the distance, so the level drops by about 6 dB each time the distance doubles. However, the amount of decay also depends on frequency. The SDK provides point source, line source, and linear curve attenuation models.
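The point source and line source models correspond to the standard spherical and cylindrical spreading laws (about 6 dB and 3 dB of loss per doubling of distance). The sketch below uses those textbook formulas; the linear curve's `min_distance`/`max_distance` parameters are hypothetical names and defaults, frequency-dependent decay is left out, and the SDK's exact curves may differ.

```python
import math


def point_source_gain_db(distance, ref_distance=1.0):
    """Inverse square law (spherical spreading): about -6 dB per doubling of distance."""
    d = max(distance, ref_distance)  # no boost closer than the reference distance
    return -20.0 * math.log10(d / ref_distance)


def line_source_gain_db(distance, ref_distance=1.0):
    """Cylindrical spreading from a line source: about -3 dB per doubling of distance."""
    d = max(distance, ref_distance)
    return -10.0 * math.log10(d / ref_distance)


def linear_curve_gain(distance, min_distance=1.0, max_distance=50.0):
    """Linear amplitude ramp from 1 at min_distance down to 0 at max_distance."""
    if distance <= min_distance:
        return 1.0
    if distance >= max_distance:
        return 0.0
    return 1.0 - (distance - min_distance) / (max_distance - min_distance)


if __name__ == "__main__":
    for d in (1.0, 2.0, 4.0, 8.0):
        print(d, round(point_source_gain_db(d), 2), round(line_source_gain_db(d), 2))
```

A line source (for example a road or a long pipe) keeps more of its level at a distance than a point source, which is why a single inverse square curve is not enough for every sound in a scene.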

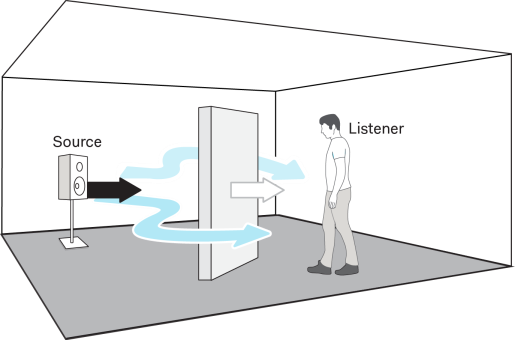

Occlusion

The occlusion effect simulates what happens when a sound wave encounters an obstacle. The resulting sound depends on the properties of the obstacle, such as its material and thickness.

In some cases, an obstacle occludes the sound path between a sound source and the listener's head asymmetrically. For a more realistic experience, VIVE 3DSP Audio SDK models binaural occlusion, producing a different occlusion effect for each ear.

The SDK's occlusion effect uses a high-precision analytical geometry technique. The occluded sound is computed from geometric properties of the obstacles, such as their position, rotation, size, and shape. It also runs efficiently without relying on the physics engine of platforms such as Unity or Unreal Engine.
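The SDK's analytical geometry technique is not described here, so the following is only a hypothetical illustration of geometry-based binaural occlusion without a physics engine: each ear's direct path is tested analytically against a spherical obstacle, and the deeper the path cuts through the sphere, the more it is attenuated. The sphere shape, the linear occlusion ramp, and the `transmission` parameter standing in for material and thickness are all assumptions of this sketch.

```python
import math


def _closest_distance_to_segment(center, a, b):
    """Shortest distance from point `center` to the 3D segment a-b."""
    ab = [bi - ai for ai, bi in zip(a, b)]
    ac = [ci - ai for ai, ci in zip(a, center)]
    ab_len2 = sum(v * v for v in ab)
    # Parameter of the projection onto the line, clamped to the segment.
    t = 0.0 if ab_len2 == 0.0 else sum(x * y for x, y in zip(ac, ab)) / ab_len2
    t = max(0.0, min(1.0, t))
    closest = [ai + t * v for ai, v in zip(a, ab)]
    return math.dist(closest, center)


def ear_occlusion_gain(source, ear, sphere_center, sphere_radius, transmission):
    """Gain in [transmission, 1] for one ear behind a hypothetical spherical obstacle.

    Occlusion grows linearly from 0 (path grazes the sphere) to 1 (path passes
    through the centre); `transmission` is the gain left when fully blocked.
    """
    d = _closest_distance_to_segment(sphere_center, source, ear)
    occlusion = max(0.0, min(1.0, 1.0 - d / sphere_radius))
    return 1.0 - occlusion * (1.0 - transmission)


def binaural_occlusion(source, left_ear, right_ear, sphere_center, sphere_radius,
                       transmission=0.2):
    """Return (left_gain, right_gain); the two ears can be occluded differently."""
    return (ear_occlusion_gain(source, left_ear, sphere_center, sphere_radius, transmission),
            ear_occlusion_gain(source, right_ear, sphere_center, sphere_radius, transmission))
```

In a scene where a small obstacle sits exactly on the line from the source to the left ear but misses the path to the right ear, the left ear is attenuated to the transmission gain while the right ear is unaffected, which is the asymmetric case described above.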

Ambisonic Decoder

Audio for 360 and VR videos is now mostly recorded with sound field microphones in 4-channel B-format. These channels do not correspond to a fixed set of loudspeaker positions. Instead, the Ambisonic decoder interprets and reshapes the recording so that it can dynamically render stereo audio according to the listener's head rotation.
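As a minimal sketch of head-tracked decoding, assuming the AmbiX convention (ACN channel order W, Y, Z, X with SN3D normalization; x forward, y left, z up, azimuth counterclockwise), the code below counter-rotates a first-order frame by the listener's yaw and then decodes it to stereo with two virtual cardioid microphones. This basic decoder is chosen for brevity and handles yaw only; it is not the SDK's decoder, and a binaural renderer would normally filter the signal through HRTFs instead.

```python
import math


def encode_foa_ambix(sample, azimuth_rad, elevation_rad=0.0):
    """Encode a mono sample as first-order AmbiX (W, Y, Z, X; SN3D)."""
    ce = math.cos(elevation_rad)
    return (sample,
            sample * math.sin(azimuth_rad) * ce,
            sample * math.sin(elevation_rad),
            sample * math.cos(azimuth_rad) * ce)


def rotate_yaw(frame, head_yaw_rad):
    """Counter-rotate the field so sources stay fixed while the head turns left by head_yaw_rad."""
    w, y, z, x = frame
    c, s = math.cos(head_yaw_rad), math.sin(head_yaw_rad)
    # A source at azimuth phi ends up at phi - head_yaw relative to the head.
    return (w, -x * s + y * c, z, x * c + y * s)


def decode_stereo(frame):
    """Decode with virtual cardioid mics aimed at +90 deg (left) and -90 deg (right)."""
    w, y, _z, _x = frame
    return 0.5 * (w + y), 0.5 * (w - y)


if __name__ == "__main__":
    front = encode_foa_ambix(1.0, 0.0)  # a source straight ahead
    print(decode_stereo(rotate_yaw(front, 0.0)))
    print(decode_stereo(rotate_yaw(front, math.pi / 2)))
```

With the head facing forward, the frontal source is split equally between both channels; after the listener turns 90 degrees to the left, the same source is heard entirely in the right channel, as expected for a source that stays fixed in the world.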